Same LLM, Better Web Search, Better Outcome


When LLMs search the web, the retrieved web content adds to the LLM context window, filling it up with every search round. That growing context drives up token consumption, cost, and latency. We tested how Claude Sonnet 4.6 performs when it uses its own built-in web search compared to when it searches through a compact external API (in this case, the You.com Search API). In both instances we used Claude Sonnet 4.6 with identical prompts across 50 queries spanning four complexity tiers, all running with default LLM settings.

You.com web search results compared to Claude’s built-in web search.

How LLMs Use Web Search

When you ask an LLM a question that requires current information—what is today's stock price, who won last night's game, what did the latest earnings call say—it first reasons that it needs to go find something. It issues a search, receives web content, and then reasons again over that content to produce a cited answer. These are fundamentally two different cognitive steps, and they happen whether the LLM uses a built-in search tool or an external API like You.com.

This behavior spans a wide range of queries. On one end, a simple lookup: "What is the current price of AAPL?" The LLM pauses once to search, then returns an answer. On the other end, deep research: "Which pharmaceutical companies have active GLP-1 drugs in Phase 3 trials?" The LLM may search 10 to 20 times, comparing clinical trial registries, recent filings, and press releases. In both cases, every piece of web content the LLM retrieves becomes context it has to carry, and that context directly determines how many tokens you pay for.
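To make the accumulation concrete, here is a minimal sketch of how processed input tokens grow across search rounds. It assumes the model re-reads everything retrieved so far on each round; the prompt size and per-round token counts are illustrative assumptions, not measurements from this benchmark.

```python
def cumulative_input_tokens(prompt_tokens: int, tokens_per_round: list[int]) -> int:
    """Total input tokens processed when each round re-reads all prior context."""
    context = prompt_tokens
    total = 0
    for added in tokens_per_round:
        total += context   # the model reads the current context to decide the next step
        context += added   # the search results are appended to the context
    total += context       # final pass: the model reads everything to write the answer
    return total

# Hypothetical numbers: a 200-token prompt and three search rounds.
raw_pages = cumulative_input_tokens(200, [8000, 8000, 8000])  # large raw-page payloads
snippets = cumulative_input_tokens(200, [1500, 1500, 1500])   # compact snippet payloads
print(raw_pages, snippets)
```

Under these assumptions the total grows faster than the payload size alone would suggest, because earlier results are carried into every later round.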

Everything above assumes the LLM controls the search, and that is how most workflows work today. But there is another way. The developer can control the search directly, decide what to look for, and hand curated content to the LLM. Both patterns are valid, but they trade off simplicity against control. Here is how each one works.

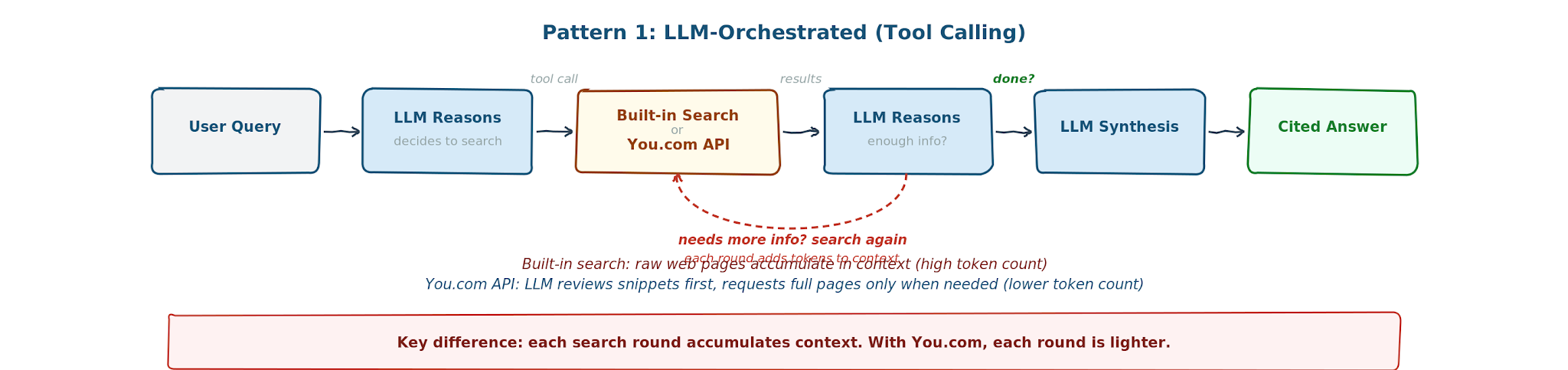

Pattern 1: LLM-Orchestrated (Tool Calling)

In this pattern, the LLM (here, Claude Sonnet 4.6) controls the search. It decides when to search and what to search for, then calls a web search tool to retrieve it. This is how Claude works with its built-in web search. It is also how Claude works with You.com search, which is simply another web search tool it can call instead of the built-in one. The You.com search returns snippets first. Claude reviews them and decides whether the snippets are enough or whether it needs full page content through You.com's livecrawl. This gives Claude control over how much context it pulls in, rather than always ingesting raw web pages.

Example: "Write a story about Obama." The LLM decides to search for "obama facts" or "barack obama." You do not control the search terms; the LLM does. This is the simpler integration path.
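A rough sketch of the snippet-first flow is shown below. The tool definition follows the general shape of a custom tool in Anthropic's Messages API (`name`, `description`, `input_schema`), but the tool name `you_search`, the `livecrawl` boolean parameter, and the `fake_search_backend` function are hypothetical stand-ins for illustration. This is not the actual You.com request format.

```python
# Custom tool definition; the name and parameters are hypothetical.
YOU_SEARCH_TOOL = {
    "name": "you_search",
    "description": "Search the web. Returns short snippets by default; "
                   "set livecrawl=true to also fetch full page content.",
    "input_schema": {
        "type": "object",
        "properties": {
            "query": {"type": "string"},
            "livecrawl": {"type": "boolean"},
        },
        "required": ["query"],
    },
}

def fake_search_backend(query: str, livecrawl: bool) -> list[dict]:
    """Stand-in for a real search call; returns canned results."""
    result = {"url": "https://example.com/a", "snippet": f"Short summary for: {query}"}
    if livecrawl:
        result["page_text"] = "Full page text would go here. " * 50
    return [result]

def handle_tool_call(tool_input: dict) -> list[dict]:
    """Run the search the model asked for; full pages only when it opts in."""
    return fake_search_backend(tool_input["query"], tool_input.get("livecrawl", False))

# The model first asks for snippets, then (only if needed) for full pages.
first = handle_tool_call({"query": "barack obama"})
second = handle_tool_call({"query": "barack obama", "livecrawl": True})
print(len(str(first)), len(str(second)))  # the snippet-only payload is smaller
```

In a real integration, the result of `handle_tool_call` would be returned to the model as a tool result, and the model would decide whether to search again.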

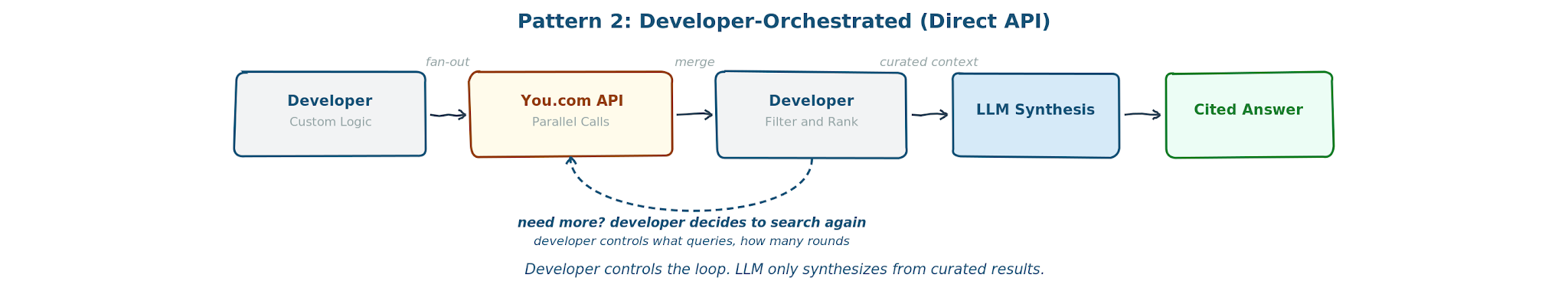

Pattern 2: Developer-Orchestrated (Direct API)

In this pattern, the developer controls the search directly. This means you can fan out 10 parallel queries, apply your own filters and ranking, and feed curated context to the LLM. The LLM only does synthesis, never search.

Example: Same question: "Write a story about Obama." But now the developer controls the search directly. They fan out 10 parallel queries to You.com ("obama early life," "obama presidency highlights," "obama post-presidency"), filter and rank the results, and hand curated context to the LLM. The LLM writes the story from pre-selected sources.
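The fan-out, filter, and rank steps can be sketched as follows. The `search` function here is a hypothetical stub that returns canned results with a made-up `score` field; a real implementation would call a search API and apply its own ranking signal.

```python
from concurrent.futures import ThreadPoolExecutor

def search(query: str) -> list[dict]:
    """Hypothetical stand-in for a search API call; returns canned, scored results."""
    slug = query.replace(" ", "-")
    return [
        {"url": f"https://example.com/{slug}", "snippet": f"About {query}", "score": len(query)},
        {"url": "https://example.com/shared", "snippet": "Appears for every query", "score": 1},
    ]

def gather_context(queries: list[str], top_k: int = 3) -> str:
    # Fan out: run all searches in parallel.
    with ThreadPoolExecutor(max_workers=10) as pool:
        batches = list(pool.map(search, queries))
    # Filter: keep one result per URL.
    unique = {}
    for batch in batches:
        for hit in batch:
            unique.setdefault(hit["url"], hit)
    # Rank: highest score first, then keep the top_k results.
    ranked = sorted(unique.values(), key=lambda h: h["score"], reverse=True)[:top_k]
    return "\n".join(f"[{h['url']}] {h['snippet']}" for h in ranked)

queries = ["obama early life", "obama presidency highlights", "obama post-presidency"]
context = gather_context(queries)
print(context)  # this curated text is what gets handed to the LLM for synthesis
```

The LLM never sees the discarded results, which is what keeps the context small in this pattern.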

This article focuses on the first pattern, the most common way teams use LLMs with web search today. The second pattern, however, unlocks even more flexibility via the You.com Search API.

In Pattern 1, built-in web search is convenient but the search is coupled to the LLM provider. The developer has no control over what gets retrieved, how many searches the LLM fires, or how much context accumulates in the conversation window. You.com gives the same simplicity with better economics, full observability, and provider independence.

What the Data Shows: Claude + You.com Search Wins on Every Metric

We ran 50 real-world queries across four complexity tiers on Claude Sonnet 4.6 with default Anthropic settings and then ran the same queries on Claude Sonnet 4.6 using the You.com Search API.

The results are consistent across every tier.

- Reduction in token bloat. Built-in search injects raw web content into the conversation context, and each search round adds to it. With You.com, Claude Sonnet 4.6 reviews compact snippets first and only pulls full pages when it needs them. The result: 61% fewer tokens per query on average (47k vs. 121k). To put that in perspective, 121k tokens is roughly 90,000 words of web content Claude Sonnet 4.6 has to carry for a single question.

- Lower cost. First, consider the search fees themselves: You.com charges half what Anthropic charges per search call. Second, and more significantly, LLM providers bill per input token. Every extra word of web content sitting in the context window costs money at inference time. When native search pulls 2.6x more content into context, that compounds directly into the invoice. Bottom line: 60% lower cost per query with You.com.

- Faster speed. Fewer search rounds and smaller payloads mean Claude Sonnet 4.6 spends less time waiting for results and less time processing them. You.com averaged 52 seconds per query vs. 87 seconds for native, 40% faster end to end.

- Quality holds or improves. Despite sending less content to Claude Sonnet 4.6, You.com produced higher-quality answers in 30 of 47 queries (average score 16.7/20 vs. 15.6/20). The scoring methodology is detailed in the "How We Measured Quality" section below. The short version: a separate LLM (GPT-5.4) blindly judged both answers for each query without knowing which search path produced them.

- Full observability. With You.com, the search is a standard tool call in the API request. The full payload appears in the API response: every source URL, every snippet, every token count. Developers can log it, debug it, and audit exactly what content Claude Sonnet 4.6 consumed. In contrast, built-in search is opaque: the final answer is visible, but the raw web content Claude Sonnet 4.6 ingested to produce it is not.

- No LLM lock-in. You.com is a plain REST API that works with any LLM. Switch providers without rebuilding the search integration. Zero data retention on Team/Enterprise plans and SOC 2 certified.

This benchmark used the Claude API with the You.com Search API as a web search tool, but the same economics apply anywhere Claude calls You.com for search, including Claude Code and Claude Cowork connected to You.com Search via MCP. If your team is running web search queries through Claude today, switching to You.com reduces your bill immediately.

TLDR: developers using Claude with the You.com Search API get better answers faster and at less than half the cost.

Benchmark Results by Complexity Tier

The benchmark included 50 queries spanning news, finance, sports, healthcare, tech, regulation, and science, across four complexity tiers. Of the 50, 47 completed on both paths (3 timed out on the native path). The per-query cost includes LLM inference plus search fees.

- Simple (12 queries). Single-fact lookups. One search, one answer. E.g., "What is the current price of NVIDIA stock?" or "What were the Powerball winning numbers?"

- Moderate Synthesis (18 queries). Requires reading and combining information from a few sources. E.g., "Summarize the EU AI Act enforcement updates in 2026" or "What are the latest developments in weight loss drugs?"

- Complex Multi-Source (12 queries, 11 completed on both paths). Cross-referencing multiple entities or datasets. E.g., "Compare the latest inflation rates, interest rates, and GDP growth for the US, EU, and Japan" or "What are the current market caps and PE ratios for the Magnificent 7?"

- Deep Research (8 queries, 6 completed on both paths). Exhaustive enumeration requiring 10-20+ searches. E.g., "List all commercial nuclear fusion companies that have raised over $100M" or "Which cities worldwide have implemented congestion pricing?"

| Tier | Queries | Native Cost | You.com Cost | Cost Saved | Native Latency | You.com Latency | Faster | Token Ratio | Native Searches | You.com Searches |
|---|---|---|---|---|---|---|---|---|---|---|
| Simple | 12 | $0.09 | $0.03 | 63% | 22s | 11s | 51% | 3.0x | 2.0 | 1.4 |
| Moderate | 18 | $0.25 | $0.09 | 64% | 61s | 39s | 36% | 2.8x | 4.1 | 2.9 |
| Complex | 11 | $0.70 | $0.28 | 60% | 129s | 69s | 46% | 2.5x | 11.3 | 7.5 |
| Deep Research | 6 | $1.49 | $0.63 | 58% | 221s | 146s | 34% | 2.4x | 21.7 | 12.3 |
| OVERALL | 47 | $0.47 | $0.19 | 60% | 87s | 52s | 40% | 2.6x | 7.5 | 4.8 |

Cost = average per query (LLM inference + search fees). Latency = average wall-clock time per query. Token Ratio = Native tokens / You.com tokens.
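As a quick check on how the derived columns relate to the raw ones, the sketch below recomputes the headline figures from the OVERALL row and the average token counts quoted earlier (121k vs. 47k). The helper name `pct_reduction` is ours, not part of any toolkit.

```python
def pct_reduction(baseline: float, improved: float) -> float:
    """Percentage by which `improved` is lower than `baseline`."""
    return (baseline - improved) / baseline * 100

cost_saved = pct_reduction(0.47, 0.19)   # dollars per query, native vs. You.com
faster = pct_reduction(87, 52)           # seconds per query, native vs. You.com
token_ratio = 121_000 / 47_000           # native tokens / You.com tokens

print(f"{cost_saved:.0f}% cost saved, {faster:.0f}% faster, {token_ratio:.1f}x tokens")
# prints "60% cost saved, 40% faster, 2.6x tokens"
```

Recomputing the per-tier percentages from the rounded dollar and second values can differ by a few points from the table, presumably because the published percentages were computed from unrounded data.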

On average, built-in search fired 7.5 searches pulling 75 sources per query vs. 4.8 searches and 28 sources for You.com. The gap is widest on deep research queries.

Here is how the results break down across the four complexity tiers:

- Cost scales with complexity. Simple queries cost pennies on either path. The savings compound on complex and deep research queries, where built-in search averages $0.70 to $1.49 per query, roughly 8x to 17x its cost on simple queries ($0.09).

- Fewer searches, same quality. You.com consistently uses fewer search calls across every tier. This is because Claude Sonnet 4.6 reviews snippets first and only requests full pages when needed, so each search round is more targeted.

- Deep research is where it matters most. Built-in search fires 21.7 searches per deep research query vs. 12.3 for You.com. That gap drives the token bloat, cost, and latency differences.

- The absolute latency gap widens with complexity. Simple queries are fast on both paths (22s vs. 11s). On complex and deep research queries, built-in search averages roughly 2 to 4 minutes (129s and 221s), while You.com stays under 2.5 minutes (69s and 146s).

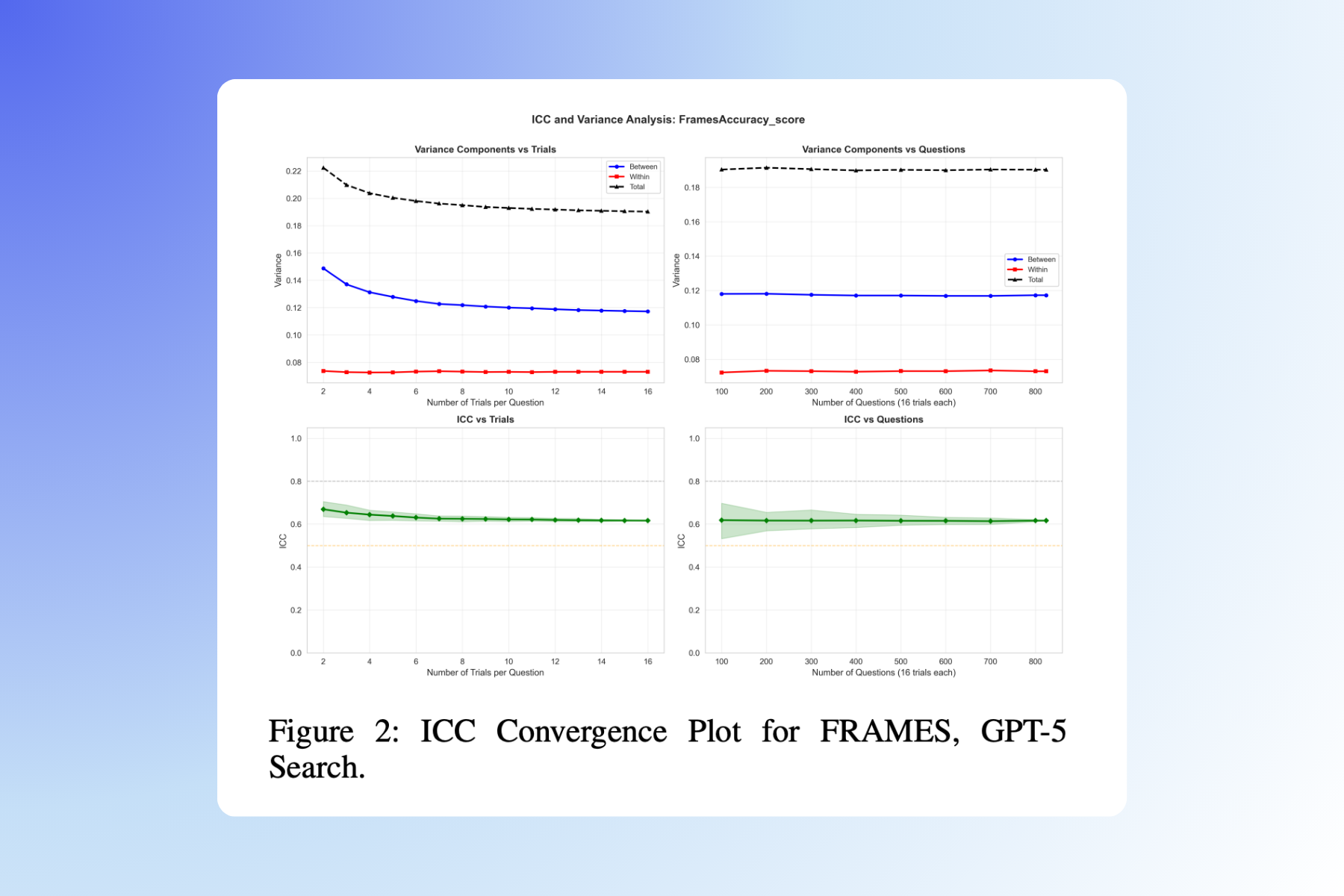

How We Measured Quality

Measuring answer quality for web search is tricky because there is no ground truth to compare against. The web is live, answers change daily, and what counts as a "good" answer depends on the question. Our approach: use a separate LLM (GPT-5.4) as a blind judge. For each query, the judge receives both answers side by side in randomized order, labeled only as "Answer A" and "Answer B." It does not know which came from You.com and which came from native search. We use a different model family as judge (GPT judging Claude outputs) to avoid any self-preference bias.

The judge scores each answer on four dimensions, each rated 1 to 5:

- Completeness (1-5): Does the answer address all parts of the question? A score of 1 means major aspects are missing; 5 means every part of the question is thoroughly addressed.

- Relevance (1-5): Is the information directly relevant to what was asked, or does it include tangential content? A score of 5 means everything in the answer serves the query.

- Specificity (1-5): Does the answer include concrete data points (numbers, dates, names) rather than vague generalities? A score of 5 means the answer is rich with verifiable specifics.

- Citation Quality (1-5): Are sources cited, and do they appear credible and traceable? A score of 5 means claims are backed by specific, identifiable sources.

The four scores sum to a maximum of 20 per answer. For each of the 47 queries, the judge also declares an overall verdict: which answer is better, or whether the two are comparable.

| Metric | Native | You.com | Both |
|---|---|---|---|
| Avg Score | 15.6 / 20 | 16.7 / 20 | - |
| Verdict | 14 wins | 30 wins | 3 comparable |
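The blind-judging scheme described above can be sketched roughly as follows. The function names and the hard-coded judge output are hypothetical; a real run would send the anonymized pair to the judge model and parse its scores.

```python
import random

DIMENSIONS = ("completeness", "relevance", "specificity", "citation_quality")

def total_score(scores: dict[str, int]) -> int:
    """Sum the four 1-5 dimension scores into a total between 4 and 20."""
    for dim in DIMENSIONS:
        if not 1 <= scores[dim] <= 5:
            raise ValueError(f"{dim} must be between 1 and 5")
    return sum(scores[dim] for dim in DIMENSIONS)

def blind_pair(native_answer: str, you_answer: str, rng: random.Random) -> tuple[dict, dict]:
    """Shuffle the two answers into anonymous A/B slots; return (labeled answers, key)."""
    pair = [("native", native_answer), ("you.com", you_answer)]
    rng.shuffle(pair)
    labeled = {"Answer A": pair[0][1], "Answer B": pair[1][1]}
    key = {"Answer A": pair[0][0], "Answer B": pair[1][0]}  # never shown to the judge
    return labeled, key

labeled, key = blind_pair("native text", "you.com text", random.Random(0))

# Hypothetical judge output for the two anonymous answers.
judged = {
    "Answer A": {"completeness": 4, "relevance": 4, "specificity": 4, "citation_quality": 3},
    "Answer B": {"completeness": 5, "relevance": 4, "specificity": 4, "citation_quality": 4},
}

# Map the anonymous labels back to search paths only after scoring.
totals = {key[label]: total_score(scores) for label, scores in judged.items()}
print(totals)
```

Keeping the label-to-path key outside the judge prompt is what makes the comparison blind.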

TLDR: You.com sends less content to Claude Sonnet 4.6, but the content it sends is more targeted. Claude Sonnet 4.6 reviews the snippets and only requests full pages when needed. This produces answers that are at least as good, and in most cases better, than what Claude Sonnet 4.6 produces when it ingests everything from built-in search.

Methodology Notes

Both paths used Anthropic's Messages API. Native search used Anthropic's web_search_20260209 tool with default settings, including code execution enabled. Code execution is a feature where Claude Sonnet 4.6 can write and run small programs to trigger additional searches dynamically. We left it on because that is what a developer gets out of the box when they add the web search tool to a Claude API call with no additional parameters.

Three of the 50 queries timed out on the native path. These were deep research and complex queries where built-in search triggered 15-20+ consecutive search rounds, causing the API connection to reset after three to five minutes of continuous processing. You.com completed those same queries successfully, but we scored only the 47 that completed on both paths for a fair apples-to-apples comparison.
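The filtering rule amounts to a set intersection. In this small sketch, the query numbers follow the appendix table, where queries 39, 49, and 50 are marked as timeouts; the variable names are ours.

```python
all_queries = set(range(1, 51))   # query numbers 1 through 50
native_timeouts = {39, 49, 50}    # marked "timeout" in the appendix table

native_done = all_queries - native_timeouts
you_done = all_queries            # the You.com path completed every query

# Score only the queries that finished on both paths.
scored = sorted(native_done & you_done)
print(len(scored))  # prints 47
```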

For completeness, we also ran this benchmark with code execution disabled. The results followed the same pattern: You.com delivered lower cost, fewer tokens, and comparable or better quality. However, code execution is Claude's default behavior, and disabling it limits Claude Sonnet 4.6's ability to dynamically trigger additional searches when needed. We recommend testing with default settings, which is what this report reflects.

Developers using Claude with the You.com Search API get better answers, faster, at less than half the cost. This applies whether you use the Claude API directly, or Claude Code and Claude Cowork connected to You.com Search via MCP. If your team is running web search queries through Claude today, switching to You.com reduces your bill immediately.

Try it yourself: We ship a self-contained Python toolkit with a side-by-side comparison runner, blind judge, and batch benchmark script. Add your API keys and run it against your own queries. Contact your You.com account team or visit you.com/platform to get started.

Appendix: The 50 Benchmark Queries

The full set of queries used in this benchmark, organized by complexity tier. These were selected to represent the range of real-world web search use cases: from simple lookups to deep multi-source research.

| # | Tier | Domain | Query | Status |
|---|---|---|---|---|
| 1 | Simple | Finance | What is the current price of NVIDIA stock? | ✔ |
| 2 | Simple | Sports | What were the final scores in yesterday’s NBA games? | ✔ |
| 3 | Simple | Finance | What is the current 30-year fixed mortgage rate in the US? | ✔ |
| 4 | Simple | Sports | Who won the most recent Formula 1 Grand Prix and what was the margin? | ✔ |
| 5 | Simple | Finance | What is the current USD to Japanese yen exchange rate? | ✔ |
| 6 | Simple | Finance | What was the closing price of the S&P 500 index today? | ✔ |
| 7 | Simple | Economics | What is the latest unemployment rate reported by the Bureau of Labor Statistics? | ✔ |
| 8 | Simple | Tech | What are the top 5 trending topics on GitHub this week? | ✔ |
| 9 | Simple | Crypto | What is the current Bitcoin price and 24-hour trading volume? | ✔ |
| 10 | Simple | Entertainment | What movies are currently number 1 and 2 at the US box office? | ✔ |
| 11 | Simple | Environment | What is the current air quality index in Los Angeles? | ✔ |
| 12 | Simple | General | What were the Powerball winning numbers in the most recent drawing? | ✔ |
| 13 | Moderate | Tech | What happened in tech news today? | ✔ |
| 14 | Moderate | Finance | Current S&P 500 price and market summary | ✔ |
| 15 | Moderate | Earnings | Latest NVIDIA earnings and stock performance | ✔ |
| 16 | Moderate | Healthcare | What are the latest developments in weight loss drugs? | ✔ |
| 17 | Moderate | Regulation | Summarize the EU AI Act enforcement updates in 2026 | ✔ |
| 18 | Moderate | Tech | Apple WWDC 2026 announcements | ✔ |
| 19 | Moderate | Science | Latest SpaceX Starship launch results | ✔ |
| 20 | Moderate | Geopolitics | Current status of the Ukraine-Russia conflict and recent peace negotiations | ✔ |
| 21 | Moderate | Healthcare | What new FDA drug approvals have been announced this month? | ✔ |
| 22 | Moderate | Environment | What are the latest wildfire conditions in California and what areas are affected? | ✔ |
| 23 | Moderate | Economics | Summarize the most recent Federal Reserve meeting minutes and rate decisions | ✔ |
| 24 | Moderate | Security | What major cybersecurity breaches have been reported in the past 30 days? | ✔ |
| 25 | Moderate | Real Estate | What is the current state of the US housing market in major metro areas? | ✔ |
| 26 | Moderate | Tech | What were the key announcements from the most recent Google I/O conference? | ✔ |
| 27 | Moderate | Healthcare | Current status of bird flu outbreaks and any human transmission cases | ✔ |
| 28 | Moderate | Tech | Latest developments in humanoid robotics from Boston Dynamics, Tesla, and Figure | ✔ |
| 29 | Moderate | Earnings | Summarize the most recent earnings calls for Microsoft, including revenue and cloud growth | ✔ |
| 30 | Moderate | Trade | What new tariffs or trade restrictions between the US and China this quarter? | ✔ |
| 31 | Complex | Automotive | Compare Tesla and BYD Q1 2026 delivery numbers and market share for the US market | ✔ |
| 32 | Complex | Sports | Who won the 2026 NBA draft lottery? | ✔ |
| 33 | Complex | AI | Compare pricing, context windows, and benchmarks of Claude 4.5, GPT‑5.4, and Gemini 2.5 | ✔ |
| 34 | Complex | Automotive | Top 5 best‑selling EVs in the US, Europe, and China this year, and how do lists differ? | ✔ |
| 35 | Complex | Economics | Compare latest inflation rates, interest rates, and GDP growth for the US, EU, and Japan | ✔ |
| 36 | Complex | Tech | Which major tech companies announced layoffs in 2026 and how many were affected? | ✔ |
| 37 | Complex | Climate | Compare carbon intensity, emissions reductions, and renewable investments of US, China, India | ✔ |
| 38 | Complex | Finance | Current market caps, PE ratios, and YTD returns for the Magnificent 7 tech stocks | ✔ |
| 39 | Complex | Entertainment | Compare quarterly revenue, subscribers, and content spending: Netflix, Disney+, Prime Video | timeout |
| 40 | Complex | Regulation | What US states have passed AI regulation bills in 2025–2026 and how do they differ from EU AI Act? | ✔ |
| 41 | Complex | Space | Compare safety records, launch cadence, and payload: SpaceX Falcon 9, Rocket Lab, Blue Origin | ✔ |
| 42 | Complex | Tech | What semiconductor fabs are under construction globally, who is building them, and when online? | ✔ |
| 43 | Deep Research | Policy | List every country that has banned deepfake political advertising in 2025–2026 | ✔ |
| 44 | Deep Research | Pharma | Which pharma companies have active GLP‑1 drugs in Phase 3 trials as of today? | ✔ |
| 45 | Deep Research | Business | Trace the ownership chain of Twitter/X from founding through today | ✔ |
| 46 | Deep Research | Finance | What sovereign wealth funds have invested in US AI companies in the past 12 months? | ✔ |
| 47 | Deep Research | Energy | List all commercial nuclear fusion companies that have raised over $100M in funding | ✔ |
| 48 | Deep Research | Urban | Which cities worldwide have implemented congestion pricing for vehicles as of 2026? | ✔ |
| 49 | Deep Research | Legal | Major antitrust actions against Amazon, Google, Apple, Meta, Microsoft since Jan 2025 | timeout |
| 50 | Deep Research | AI Safety | All AI foundation model governance frameworks published or in force in 2025–2026 | timeout |

Total Cost (47 completed queries): Native $22.25 vs. You.com $8.90

Cost breakdown: LLM inference at $3/1M input tokens + $15/1M output tokens (Claude Sonnet 4.6 pricing) + search fees (You.com: $5 per 1,000 queries; Anthropic native: $10 per 1,000 searches). Average per-query cost: Native $0.47, You.com $0.19.
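The cost formula above can be written out as a short sketch. The prices come from the breakdown; the token and search counts in the example calls are illustrative assumptions, since the benchmark's per-query input/output split is not given here.

```python
INPUT_PRICE = 3.00 / 1_000_000     # dollars per input token
OUTPUT_PRICE = 15.00 / 1_000_000   # dollars per output token
SEARCH_FEE = {
    "native": 10.00 / 1000,        # dollars per Anthropic native search
    "you.com": 5.00 / 1000,        # dollars per You.com query
}

def query_cost(path: str, input_tokens: int, output_tokens: int, searches: int) -> float:
    """Per-query cost = LLM inference (input + output tokens) + search fees."""
    inference = input_tokens * INPUT_PRICE + output_tokens * OUTPUT_PRICE
    return inference + searches * SEARCH_FEE[path]

# Hypothetical queries, not rows from the benchmark.
native = query_cost("native", input_tokens=100_000, output_tokens=2_000, searches=8)
you = query_cost("you.com", input_tokens=40_000, output_tokens=2_000, searches=5)
print(f"native ${native:.3f}, you.com ${you:.3f}")
```

In this example the input-token term dominates both totals, which is consistent with the article's point that context size matters more to the bill than the per-search fee.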
